Quick note: this article was originally published in November 2017. Several details mentioned are outdated, as are most of the parts reviewed here. While there is still a healthy ecosystem of 8-bit MCUs on the market, it’s hard to recommend starting new projects without considering modern Arm-based alternatives, which now offer similar pricing and power consumption, but with much better tooling and run-time performance. (Check out “The cheapest flash microcontroller you can buy is actually an Arm Cortex-M0+” as an example.)

As an embedded design consultant, I find that the diverse collection of projects on my desk needs an equally diverse collection of microcontroller architectures that have the performance, peripheral selection, and power numbers to be the backbone of successful projects. At the same time, we all have our go-to chips: those parts that linger in our toolkit after being picked up in school, through forum posts, or from previous projects.

In 2017, we saw several new MCUs hit the market, as well as general trends continuing in the industry: the migration to open-source, cross-platform development environments and toolchains; new code-generator tools that integrate seamlessly (or not so seamlessly…) into IDEs; and, most notably, the continued invasion of ARM Cortex-M0+ parts into the 8-bit space.

I wanted to take a quick pulse of the industry to see where everything is — and what I’ve been missing while backed into my corner of DigiKey’s web site.

It’s time for a good ol’ microcontroller shoot-out.

The Rules

While some projects that come across my desk are complex enough to require a hundreds-of-MHz microcontroller with all the bells and whistles, it’s amazing how many projects work great using nothing more than a $1 chip, so this is the only rule I established for this microcontroller review. ((To get technical: I purchased several different MCUs, all less than $1, from a wide variety of brands and distributors. I’m sure people will chime in and either claim that a part is more than a dollar, or that I should have used another part which can be had for less than a dollar. I used a price-break of 100 units when determining pricing, and I looked at typical, general suppliers I personally use when shopping for parts. I avoided eBay/AliExpress/Taobao unless they were the only source for the parts, which is common for devices most popular in China and Taiwan.))

I wanted to explore the $1 pricing zone specifically because it’s the least amount of money you can spend on an MCU that’s still general-purpose enough to be widely useful in a diverse array of projects.

Any cheaper, and you end up with 6- or 8-pin parts with only a few dozen bytes of RAM, no ADC, nor any peripherals other than a single timer and some GPIO.

Any more expensive, and the field completely opens up to an overwhelming number of parts — all with heavily-specialized peripherals and connectivity options.

These MCUs were selected to represent their entire families — or sub-families, depending on the architecture — and in my analysis, I’ll offer some information about the family as a whole.

If you want to scroll down and find out who the winner is, don’t bother — there’s really no sense in trying to declare the “king of $1 MCUs” as everyone knows the best microcontroller is the one that best matches your application needs. I mean, everyone knows the best microcontroller is the one you already know how to use. No, wait — the best microcontroller is definitely the one that is easiest to prototype with. Or maybe that has the lowest impact on BOM pricing?

I can’t even decide on the criteria for the best microcontroller — let alone crown a winner.

What I will do, however, is offer a ton of different recommendations for different users at the end. Read on!

The Criteria

This microcontroller selection guide will have both qualitative and quantitative assessments. Overall, I’ll be looking at a few different categories:

Parametrics, Packaging, and Peripherals

Within a particular family, what is the range of core speed? Memory? Peripherals? Price? Package options?

Some microcontroller families are huge — with hundreds of different models that you can select from to find the perfect MCU for your application. Some families are much smaller, which means you’re essentially going to pay for peripherals, memory, or features you don’t need. But these have an economies-of-scale effect; if we only have to produce five different MCU models, we’ll be able to make a lot more of each of them, driving down the price. How do different MCU families end up on that spectrum?

Package availability is another huge factor to consider. A professional electronics engineer working on a wearable consumer product might be looking for a wafer-level CSP package that’s less than 2×2 mm in size. A hobbyist who is uncomfortable with surface-mount soldering may be looking for a legacy DIP package that can be used with breadboards and solder protoboards. Different manufacturers choose packaging options carefully, so before you dive into an architecture for a project, one of the first things to consider is making sure that it’s in a package you actually want to deal with.

Peripherals can vary widely from architecture to architecture. Some MCUs have extremely powerful peripherals with multiple interrupt channels, DMA, internal clock generators, tons of power configuration control, and various clocking options. Others are incredibly simple, almost basic. Just as before, different people will be looking for different things (even for different applications). It would be a massive undertaking to go over every single peripheral on these MCUs, but I’ll focus on the ones that all MCUs have in common, and point out fine-print “gotchas” that datasheets always seem to gloss over.

Development Experience

Any microcontroller review or selection guide should include a discussion of the overall development environment and experience.

While this is where things get subjective and opinion-oriented, I’ll attempt to present “just the facts” and let you decide what you care about. The main source of subjectivity comes from weighing these facts appropriately, which I will not attempt to do.

IDEs / SDKs / Compilers: What is the manufacturer-suggested IDE for developing code on the MCU? Are there other options? What compilers does the microcontroller support? Is the software cross-platform? How much does it cost? These are the sorts of things I’ll be exploring while evaluating the software for the MCU architecture.

Platform functionality and features will vary a lot by architecture, but I’ll look at basic project management, source-code editor quality, initialization code-generation tools, run-time peripheral libraries, debugging experience, and documentation accessibility.

I’ll focus on manufacturer-provided or manufacturer-suggested IDEs and compilers (and these will be what I use to benchmark the MCU). There are more than a dozen compilers / IDEs available for many of these architectures, so I can’t reasonably review all of them. Feel free to express your contempt for my methodology in the comments section.

Programmers / debuggers / emulators / dev boards: What dev boards and debuggers are available for the ecosystem? How clunky is the debugging experience? Every company has a slightly different philosophy for development boards and debuggers, so this will be interesting to compare.

Performance

I’ve established three different code samples I’ll be using to benchmark the parts in this microcontroller review; I’ll be measuring quantitative parameters like benchmark speed, clock-cycle efficiency, power efficiency, and code-size efficiency.

While(1) Blink

For this test, I’ll toggle a pin in a while() loop. I’ll use the fastest C code possible — while also reporting if the code generator tool or peripheral libraries were able to produce efficient code. I’ll use bitwise complement or GPIO-specific “toggle” registers if the platform supports it, otherwise, I’ll resort to a read-modify-write operation. I’ll report on which instructions were executed, and the number of cycles they took.

What this tests: This gives some good intuition for how the platform works — because it is so low-level, we’ll be able to easily look at the assembly code and individual instruction timing. Since many of these parts operate above flash read speed, this will allow us to see what’s going on with the flash read accelerator (if one exists), and as a general diagnostic / debugging tool for getting the platform up and running at the proper speed. This routine will obviously also test bit manipulation performance (though this is rarely important in general-purpose projects).
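As a rough sketch of the read-modify-write fallback described above, here is a version that runs on a PC. The `fake_gpio_t` type, its `out` field, and `LED_MASK` are hypothetical stand-ins for a memory-mapped port; on real hardware the register names and addresses come from the vendor's device header, and parts with a dedicated toggle register would write to that instead.

```c
#include <stdint.h>

/* Hypothetical stand-in for a memory-mapped GPIO port. Real register
 * names and addresses come from the vendor's device header. */
typedef struct {
    volatile uint8_t out;  /* output latch */
} fake_gpio_t;

#define LED_MASK ((uint8_t)(1u << 3))  /* assumed pin: bit 3 */

/* Read-modify-write toggle: load the latch, XOR the bit, store it back.
 * This is the fallback when the part has no dedicated toggle register. */
static inline void toggle_rmw(fake_gpio_t *port)
{
    port->out ^= LED_MASK;
}

/* Bounded version of the benchmark loop so it can run on a PC.
 * On the target this would be: while (1) { toggle_rmw(port); } */
static void blink(fake_gpio_t *port, unsigned toggles)
{
    while (toggles--) {
        toggle_rmw(port);
    }
}
```

On a target, the interesting part is what the compiler turns `toggle_rmw()` into: a single bit-toggle instruction on some cores, a load/XOR/store sequence on others.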

64-Sample Biquad Filter

This is an example of a real-world application where you often need good, real-time performance, so I thought it would be a perfect test to evaluate the raw processing power of each microcontroller. For this test, I’ll process 16-bit signed integer data through a 400 Hz second-order high-pass filter (Transposed Direct Form II implementation, for those playing along at home), assuming a sample rate of 8 kHz. We won’t actually sample the data from an ADC. Instead, we’ll process 64-element arrays of dummy input data, and record the time it takes to complete this process (by wiggling a GPIO pin wired to my 500 MS/s Saleae Logic Pro 16 logic analyzer).
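For readers who want to see the shape of such a filter, here is a minimal fixed-point sketch of a Transposed Direct Form II biquad. It is not the benchmark code from the repo: the Q14 coefficients below are my own assumption (a Butterworth high-pass at 400 Hz for an 8 kHz sample rate, rounded to integers), and the names `biquad_t` and `biquad_run` are made up for illustration.

```c
#include <stdint.h>
#include <stddef.h>

/* Q14 coefficients for a 2nd-order Butterworth high-pass, fc = 400 Hz,
 * fs = 8 kHz. Assumed values (rounded from b = 0.8006, -1.6012, 0.8006
 * and a1 = -1.5610, a2 = 0.6414); the benchmark's own may differ. */
#define Q14_ONE 16384
#define B0  13117
#define B1 (-26234)
#define B2  13117
#define A1 (-25576)
#define A2  10508

typedef struct {
    int32_t s1;  /* first delay element, Q14-scaled */
    int32_t s2;  /* second delay element, Q14-scaled */
} biquad_t;

/* Transposed Direct Form II:
 *   y  = b0*x + s1
 *   s1 = b1*x - a1*y + s2
 *   s2 = b2*x - a2*y
 * Inputs should stay well below full scale (roughly +/-8000 here) so the
 * 32-bit state cannot overflow. */
static void biquad_run(biquad_t *f, const int16_t *in, int16_t *out, size_t n)
{
    for (size_t i = 0; i < n; i++) {
        int32_t x = in[i];
        int32_t y = (B0 * x + f->s1) / Q14_ONE;  /* back to sample scale */
        f->s1 = B1 * x - A1 * y + f->s2;
        f->s2 = B2 * x - A2 * y;
        out[i] = (int16_t)y;
    }
}
```

Feeding it a constant input is a quick sanity check: a high-pass filter should pass the initial step and then settle close to zero. The three 16×16-bit multiplies per state update are exactly the work that an 8-bit core has to emulate in software.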

In addition to the samples-per-second measure of raw processing power, I’ll also measure power consumption, which will give us a “nanojoule-per-sample” measure; this will help you figure out how efficient a processor is. While I’ve traditionally used µCurrent units for this, I ended up using a Silicon Labs EFM8 Sleepy Bee STK — I ripped the target processor off the board, turning it into a $30 time-domain logarithmic power meter. If you’re interested in more information, check out my EFM8 review, which has the details of these excellent tools.
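The nanojoule-per-sample figure is just supply voltage times current times elapsed time, divided by the number of samples processed. A small sketch of that arithmetic, with integer units chosen to avoid floating point (the function name and unit choices are mine, not taken from the test code):

```c
#include <stdint.h>

/* Energy per processed sample in nanojoules:  E = V * I * t / N.
 * With V in millivolts, I in microamps, and t in microseconds, the
 * product V*I*t is in femtojoules (1e-3 * 1e-6 * 1e-6 = 1e-15 J), so
 * dividing by 1e6 converts it to nanojoules. */
static uint32_t nj_per_sample(uint32_t supply_mv, uint32_t current_ua,
                              uint32_t elapsed_us, uint32_t samples)
{
    if (samples == 0) {
        return 0;  /* nothing processed, nothing to report */
    }
    uint64_t femtojoules = (uint64_t)supply_mv * current_ua * elapsed_us;
    return (uint32_t)(femtojoules / 1000000u / samples);
}
```

As a made-up example (not a measurement from this review), a part drawing 5 mA at 3.0 V that finishes 64 samples in 64 µs works out to `nj_per_sample(3000, 5000, 64, 64)`, or 15 nJ per sample.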

What this tests: Memory and 16-bit math performance per microamp, essentially. The 8-bit MCUs in our round-up are going to struggle with this pretty hardcore, so it’ll be interesting to see just how much better the 16- and 32-bit MCUs do. Like it or hate it, this will also evaluate a compiler’s optimization abilities since different compilers implement math routines quite differently.

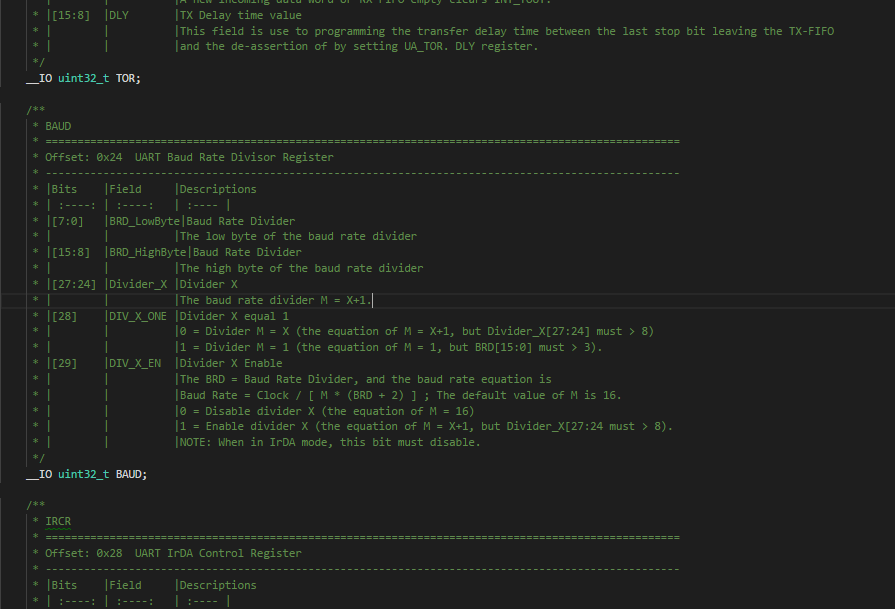

DMX-512 RGB light

DMX-512 is a commonly-used lighting protocol for stage, club, and commercial lighting systems. Electrically, it uses RS-485; each frame starts with a long BREAK, followed by a “0” start code and then up to 512 bytes of channel data, all transmitted at 250 kbaud. In this test, I’ll implement a DMX-512 receiver that directly drives a common-anode RGB LED. I will do this with whatever peripheral library or code-generator tool the manufacturer provides (if any at all).

While you should really look for a precisely-timed break message, since this is only for prototyping, I’ll detect the start-of-frame by looking for an RX framing error (or a “break” signal, as some UARTs support LIN).

I’ll minimize power consumption by lowering the frequency of the CPU as much as possible, using interrupt-based UART receiver routines, and halting or sleeping the CPU. I’ll report the average power consumption (with the LED removed from the circuit, of course). To get a rough idea of the completeness and quality of the code-generator tools or peripheral libraries, I’ll report the total number of statements I had to write, as well as the flash usage.

What this tests: This is a sort of holistic test that lets me get into the ecosystem and play around with the platform. This stuff is the bread and butter of embedded programming: interrupt-based UART reception with a little bit of flair (framing error detection), multi-channel PWM configuration, and nearly-always-halted state-machine-style CPU programming. Once you have your hardware set up and you know what you’re doing (say, after you’ve implemented this on a dozen MCUs before…), with a good code-gen tool or peripheral library, you should be able to program this with just a few lines of code in an hour or less — hopefully without having to hit the datasheet much at all.
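The receive logic described above boils down to a small state machine driven from the UART interrupt. The sketch below is hardware-independent and only illustrative: `dmx_rx_t`, `dmx_on_rx()`, and `pwm_duty_common_anode()` are hypothetical names, and a real port would call `dmx_on_rx()` from the vendor's RX interrupt handler with whatever framing-error or break flag that UART exposes.

```c
#include <stdint.h>
#include <stdbool.h>

typedef enum { DMX_WAIT_BREAK, DMX_WAIT_START, DMX_DATA } dmx_state_t;

typedef struct {
    dmx_state_t state;
    uint16_t slot;   /* next data slot number, starting at 1 */
    uint16_t base;   /* first slot this fixture listens to */
    uint8_t rgb[3];  /* latest red, green, blue levels */
} dmx_rx_t;

/* Call once per received UART character, e.g. from the RX interrupt.
 * framing_error is the UART's framing-error (or break-detect) flag. */
static void dmx_on_rx(dmx_rx_t *rx, uint8_t byte, bool framing_error)
{
    if (framing_error) {            /* BREAK: a new frame is starting */
        rx->state = DMX_WAIT_START;
        return;
    }
    switch (rx->state) {
    case DMX_WAIT_START:
        /* Start code 0 marks ordinary dimmer data; skip other frames. */
        rx->state = (byte == 0) ? DMX_DATA : DMX_WAIT_BREAK;
        rx->slot = 1;
        break;
    case DMX_DATA:
        if (rx->slot >= rx->base && rx->slot < rx->base + 3) {
            rx->rgb[rx->slot - rx->base] = byte;
        }
        rx->slot++;
        break;
    default:                        /* DMX_WAIT_BREAK: discard */
        break;
    }
}

/* Common-anode LED: the pin sinks current, so the PWM duty is inverted. */
static uint8_t pwm_duty_common_anode(uint8_t level)
{
    return (uint8_t)(255u - level);
}
```

Between characters the CPU has nothing to do, which is why this style of code spends nearly all of its time halted or asleep.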

Test notes: I’m using FreeStyler to generate DMX messages through an FTDI USB-to-serial converter (the program uses the Enttec Open DMX plugin to do this). As my FTDI cable is 5V, I put a 1k resistor with a 3.3V zener diode to ground, which clamps the signal to 3.3V. The zener clamp isn’t there to protect the MCU (all of the chips tested have clamp diodes to protect against over-voltage) but rather to keep the MCU from inadvertently drawing power from the Rx pin, which would ruin my current measurement results.

Evaluation Code

All code I used to evaluate these parts in this microcontroller selection guide is available on my microcontroller-test-code GitHub repo. Check it out, fork it, submit patches, and keep me honest — if your results differ from mine, let’s get this post updated.

This page will compare the devices, development tools, and IDEs all together. However, to prevent this article from getting overwhelmingly long, I’ve created review pages for each device that cover way more details about the architecture — along with more complete testing notes, different benchmarking variations, and in-depth assessment. If an architecture strikes your interest, you should definitely check out the full review below.

Part: ATtiny1616

The new-for-2017 tinyAVR line includes seven parts with XMEGA-style peripherals, a two-cycle 8×8 multiplier, the new UPDI one-wire debug interface, and a 20 MHz oscillator that should shoot some energy into this line of entry-level AVR controllers that was looking quite long in the tooth next to other 8-bit parts.

Part: ATmega168PB

The AVR earned its hobbyist-friendly badge as the first MCU programmed in C with open-source tools. The “B” version of the classic ATmega168 takes a price cut due to a die-shrink, but little else has changed, including the anemic 8 MHz internal oscillator — and, like the tinyAVR, must sip on 5V to hit its full 20 MHz speed.

Part: ATSAMD10D14A

Atmel is positioning their least-expensive ARM Cortex-M0 offering — the new SAM D10 — to kill off all but the smallest TinyAVR MCUs with its performance numbers, peripherals, and price. Stand-out analog peripherals capstone a peripheral set and memory configuration that endows this part with good value.

Part: CY8C4024LQI

Reconfigurable digital logic — the PSoC’s claim to fame — is absent from this entry-level 24 MHz Arm part that also sports average analog features, and no support for Cypress’s handy “it just works” capacitive-touch hardware. Other than its unique development environment, this part treads water in a sea of low-cost ARM devices.

Part: MKE04Z8VTG4

Freescale introduced the Arm Cortex-M0 KE04 to kill off 8-bit MCUs — and with 2.7-5.5V support, tons of timers and analog options, it’s a step in that direction. Processor Expert provides a unique development experience for rapid-prototyping, which may be enough to lure some developers away from newer parts.

Part: MKL03Z8VFG4

While the KE series attacks the 8-bit sector with bells and whistles, the KL series focuses on being some of the lowest-power Arm parts on the market, with good low leakage performance in sleep mode. I’m testing this 48 MHz Arm part inside of NXP’s MCUXpresso, which recently added support for the newer Kinetis devices.

Part: HT66F0185

A basic 8-bit microcontroller with a slow, 4-cycle PIC16-style single-accumulator core. An anemic peripheral selection and limited memory capacity make this a better one-trick pony than a main system controller. Holtek has a wide range of application-specific MCUs that integrate this core with HV power and other goodies.

Part: XMC1100T016X0016

Infineon Arm chips are common picks for control projects, and the new XMC1100 is no different. 16K of RAM, a 1 MSPS six-channel ADC, flexible communications, up to 16 timer capture channels, and the ability to form a 64-bit timer for large-range timing give this part a bit of personality among entry-level Cortex-M0 microcontrollers.

Part: PIC16LF18325

Vying with the 8051 as the most famous microcontroller of all time, the latest PIC16 Five-Digit Enhanced parts feature improved peripheral interconnectivity, more timers, and better analog. Still driven by a sluggish core that clambers along at one-fourth its clock speed, the PIC16 has always been best-suited for peripheral-heavy workloads.

Part: PIC24F04KL100

An expensive 16-bit part that’s designed (and priced) to mirror the MSP430. While it has decent performance and power consumption, it’s hard not to look toward other parts — especially the PIC32MM — which offer better pricing, and can beat the PIC24 at everything other than deep-sleep current consumption.

Part: PIC32MM0064

The 32-bit MIPS-powered PIC32MM compares similarly with ARM controllers on a per-cycle basis, but doesn’t provide the same flexibility with tooling that ARM does. It’s a great part for 32-bit beginners, though, as it brings along PIC18/PIC24-style peripherals and fuse-based configuration, easing development.

Part: N76E003AT20

The N76 is a 1T-style 8051 that brings a few twists and useful additions to the basic set of ’51 peripherals. This MCU has a slower instruction timing versus the EFM8 or STC8, but it’s hard to complain about a well-documented, fully-featured MCU with North American support that you can buy with East Asia pricing.

Part: M052LDN

The M051 series is a high-value, 50 MHz Cortex-M0 with excellent timers and comms peripherals, a coherent, easy-to-use functional-oriented peripheral library, a relatively high pin-count, and utilitarian dev tools. The Achilles’ heel is the somewhat-limited IDE options, buggy software, and gross power consumption figures.

Part: LPC811M001JDH16

The LPC81x is famous among hobbyists for the LPC810 — an 8-pin DIP-package MCU. For everyone else, the LPC81x is an older, forgettable 30 MHz ARM that’s short on peripherals (it doesn’t even have an ADC). An easy-to-use function-oriented peripheral library, serial loader, and plenty of code examples on blog posts keep this part alive.

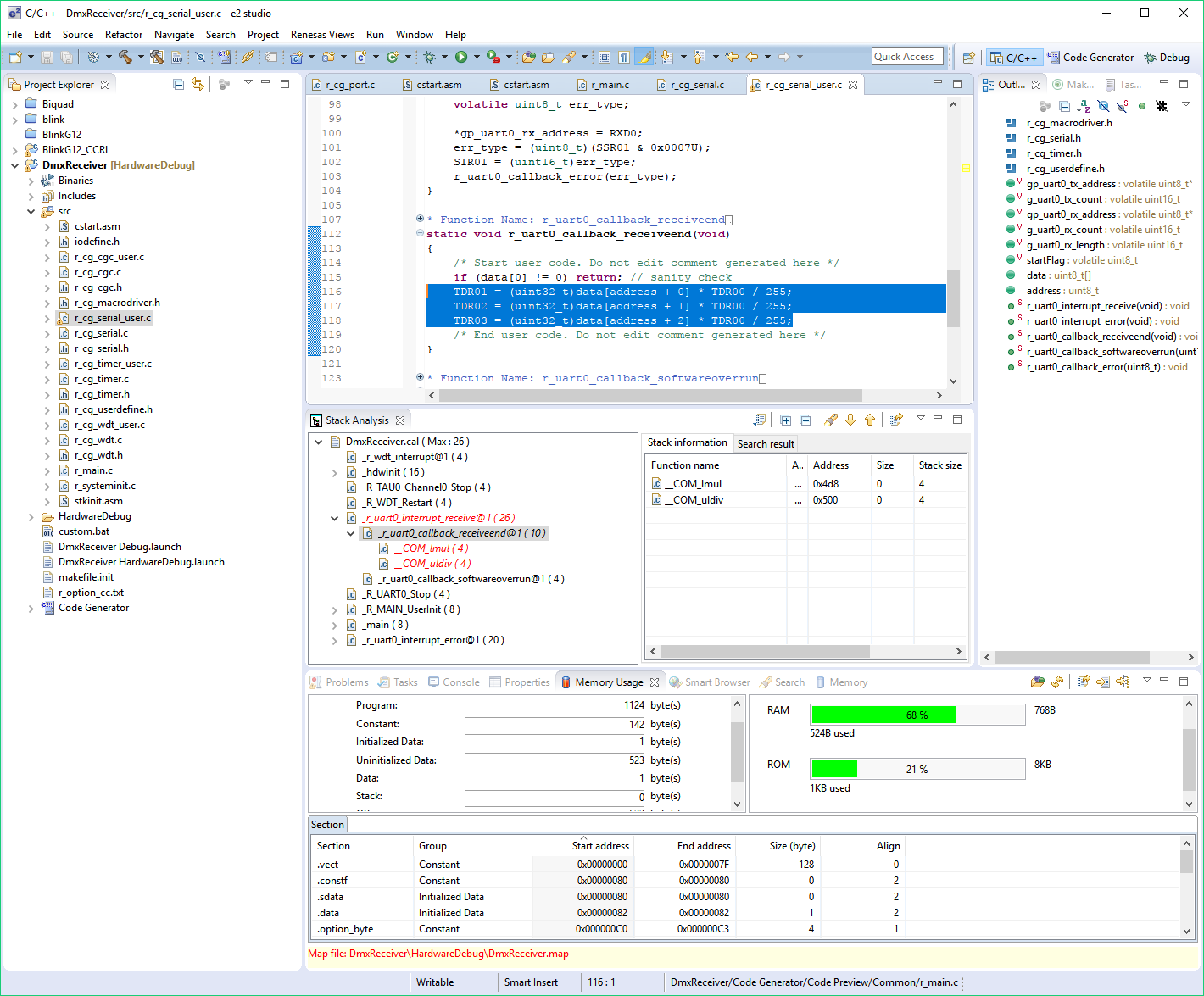

Part: R5F102A8ASP

With the RL-78, Renesas built a clever hybrid MCU with an 8-bit-wide data path and a 16-bit-wide ALU, balancing cost and performance. Excellent low-power consumption, arrayed comms and timer peripherals, plus a good code-gen tool built into the free Eclipse IDE make this part a strong competitor against the PIC24 and MSP430.

Part: LC87F1M16

There’s not much to like in the LC-87. Abysmal power consumption, lackluster peripherals, unfriendly pricing, and an obnoxiously antiquated development ecosystem should steer away almost any rational person from this architecture that, from the copyright dates on the development tools, looks to be headed to the grave.

Part: EFM8LB11

The EFM8 Laser Bee is a snappy 72 MHz 8051 MCU that’s both the fastest 8-bit MCU in our round-up, as well as one of the lowest-power. Low-cost tools, a free cross-platform Eclipse-based IDE, and a slew of easy-to-program peripherals should get both professionals and hobbyists interested in exploring this platform.

Part: STM8S103F3P6

The STM8 feels like an ARM in disguise: a 32-bit-wide program memory bus with efficient compute performance, peripheral power gating, and a nested vector interrupt controller make this thing look more like its STM32 big brothers. If only its STVD development environment felt as modern as its peripheral set does.

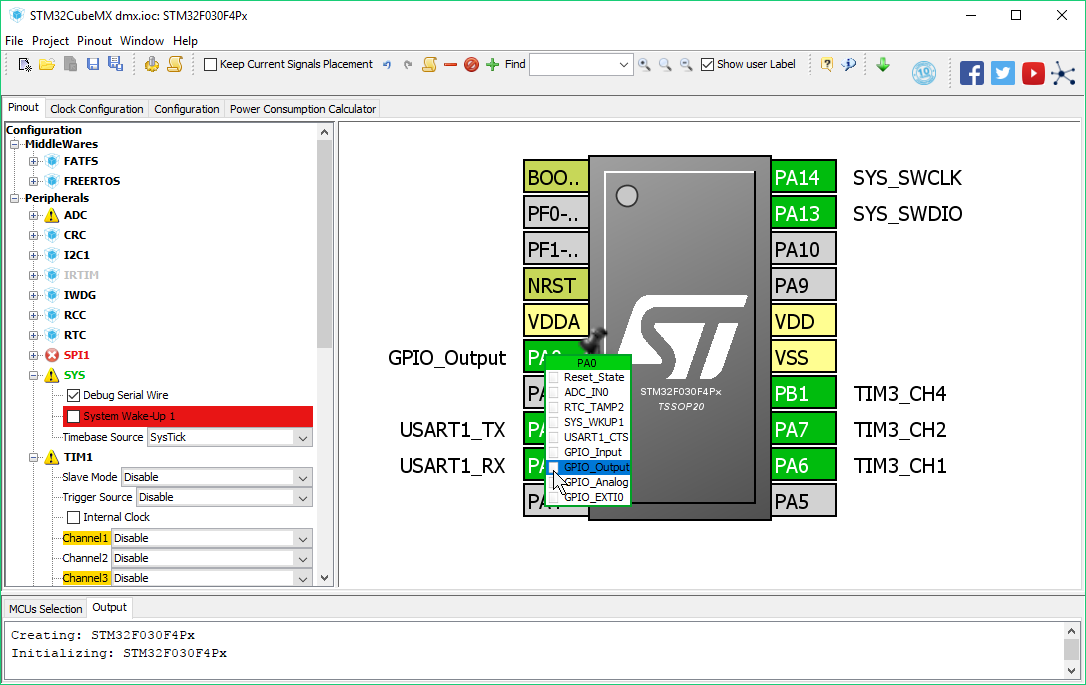

Part: STM32F030F4P6

While the F0 has an average peripheral set and worse-than-average power consumption, its low-cost ST-Link debugger, free IDE, good code-gen tools, and huge parametric latitude (up to the 180 MHz, 2 MB STM32F4) make this a useful family to learn. Plus, everyone seems to have an STM32 Discovery board lying around.

Part: STC8A8K64S4A12

A brand-new, single-cycle 8051 jam-packed full of flash, RAM, and oodles of peripherals — and a large, 64-pin package to make use of all these guts. Unfortunately, this part isn’t quite ready for prime-time: the datasheet hasn’t been translated into English yet, the errata is massive, and there’s limited availability of the part.

Part: MSP430FR2111

Texas Instruments dials down the power consumption in the latest iteration of the MSP430. FRAM memory, flexible power states, and tons of internal clocking options make this part a battery’s dream come true. You’ll pay for this power, though: the MSP430 can be twice as expensive as many 8-bit parts.

Core

Microcontrollers continue to divide into two camps: those with vendor-specific core architectures, and those that use a third-party core design. Out of the 21 microcontrollers reviewed here, eight of them use a 32-bit ARM core, which is becoming ubiquitous in the industry, even at this price point. Three of the microcontrollers use an 8-bit 8051-compatible ISA. The remaining ten use the vendor’s proprietary core design: six are 8-bit parts, three are 16-bit parts, and the PIC32MM is the sole 32-bit part that doesn’t use an ARM core.

AVR

The AVR core is a famous RISC design known for its clock-cycle efficiency — especially at the time it was introduced in 1997. I reviewed two microcontrollers with an AVR core — the tinyAVR 1-Series and the megaAVR.

The specific AVR instruction set and timing for both parts I reviewed is known as “AVRe” — this instruction set includes a two-cycle multiply and many single-cycle operations. Note that tinyAVR parts prior to the tinyAVR 1-Series are essentially completely different MCUs with a less-capable AVR core that has no multiplier.

The AVR core has a 16-bit instruction fetch width; most instructions are 16 bits wide; some are 32. Still, this is a RISC architecture, so the instruction set is anything but orthogonal; while there are 32 registers you can operate with, there are very few instructions for working directly with RAM; and of those 32 registers, I’d say that only 16 of them are true “general purpose” registers, as R0-R15 can’t be used with all register operations (load-immediate probably being the most important).

All things said, though, AVR offered a huge performance improvement over the 12-cycle or 6-cycle 8051 processors when AVR was first introduced — and the AVR is always faster than even modern 8051 derivatives when it comes to working with large arrays of data that must be stored in extended (16-bit) RAM on the 8051.

It was also designed with C compilers in mind: with 32 registers available at all times, compilers can efficiently juggle around many operands concurrently; the 8051, by comparison, has four banks of eight registers that are only easily switched between within interrupt contexts (which is actually quite useful).

And interrupts are one of the weak points of the AVR core: there’s only one interrupt priority, and depending on the ISR, many registers will have to be pushed to the stack and restored upon exit. In my testing, this often added 10 PUSH instructions or more — each taking 2 cycles.

Another issue with AVR is the generally slow clock speed: even the high-end XMEGA AVR parts can only run at up to 32 MHz, with both the parts reviewed here topping out at 20 MHz. Compare that to the EFM8, many varieties of which run at 48 MHz or higher (like the 72 MHz Laser Bee I reviewed). Even a 50%-better clock cycle efficiency doesn’t help much when the competition runs almost four times faster than the AVR.
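To put rough numbers on that last point, here is a back-of-the-envelope sketch that scales clock speed by a relative per-cycle efficiency. The function name is made up, and the 150% figure simply takes the hypothetical “50%-better” efficiency at face value; it is not a benchmark result.

```c
#include <stdint.h>

/* Relative throughput = clock (MHz) * per-cycle efficiency (percent),
 * normalized so that 100% efficiency returns the clock speed itself. */
static uint32_t relative_throughput(uint32_t clock_mhz, uint32_t efficiency_pct)
{
    return clock_mhz * efficiency_pct / 100u;
}
```

With those assumptions, a 20 MHz AVR at 150% lands at 30, while a 72 MHz part at 100% lands at 72, so the clock-speed gap still dominates.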

Microchip PIC16

There’s something fundamentally goofy about almost all aspects of the PIC16 that make it seem, at first glance, completely bizarre that it is as popular as it is.

PIC16 uses an odd-ball 14-bit-wide program memory, yet it’s an 8-bit machine. This dramatically simplifies the core architecture: a 14-bit word can hold just enough data to specify every CPU instruction, with enough free space left in the word to address up to 128 registers or 2K of program memory (for the two jump/call instructions).
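The 128-register and 2K limits fall straight out of how the 14 bits are divided up. The helpers below sketch the field extraction as I understand the mid-range encoding (a 7-bit register address for byte-oriented instructions, an 11-bit target for GOTO and CALL); the function names are made up for illustration, so check the instruction-set table in the datasheet before relying on the exact bit patterns.

```c
#include <stdint.h>

/* Field layout of the 14-bit mid-range PIC instruction word:
 *   byte-oriented ops:  oooooo d fffffff   (7-bit register address)
 *   GOTO / CALL:        ooo kkkkkkkkkkk    (11-bit program address)
 */
static uint8_t pic16_file_addr(uint16_t word)
{
    return (uint8_t)(word & 0x007Fu);      /* 7 bits -> 128 registers */
}

static uint8_t pic16_dest_bit(uint16_t word)
{
    return (uint8_t)((word >> 7) & 0x1u);  /* 0 = W, 1 = file register */
}

static uint16_t pic16_jump_target(uint16_t word)
{
    return (uint16_t)(word & 0x07FFu);     /* 11 bits -> 2K words */
}

static int pic16_is_goto(uint16_t word)
{
    return (word & 0x3800u) == 0x2800u;    /* top three bits are 101 */
}
```

For example, the word `0x07A0` decodes as a byte-oriented operation on register `0x20` with the result written back to the register, and `0x2805` is a GOTO to program address 5.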

Microchip calls the PIC16 a RISC machine since every PIC instruction (there are just 49 of them) is precisely one word long((There’s considerable debate as to what the precise definition of a “RISC architecture” is, but while the PIC16 has a single-word instruction length for all instructions, the PIC16 varies greatly from most RISC parts in that it is an accumulator-based machine, and has no working registers. I’ll leave it up to you to decide.)). The PIC16 is often described as a 4T architecture, taking 4 clock cycles to execute a single machine instruction. This isn’t entirely true, as the PIC16 takes an additional 4 cycles to fetch that instruction. Consequently, it’s actually an 8T machine, though it implements a pipeline scheme that allows each instruction to execute in 4 cycles (except jumps, which take 8).

Since real MCUs have more than 128 bytes of registers and 2K of program memory, this PIC has a bank selection register (BSR), which is written to whenever you need to swap banks (which happens a lot).

The PIC16 is a single-register machine, and that register is named W. Everything you do will essentially be moving something into W, doing something with it, and then moving it back to somewhere. Consequently, programming it in assembly is easy, and downright fun.

Because this part can store 8192 14-bit program words, Microchip will tell you this part has 14 KB of flash (close to 16 KB, right?), but users will tell you that it has 8K of program memory — 8192 words of memory — since storing an 8192-element byte array will occupy all 14 KB of its flash memory. Keep this in mind when comparing memory.
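The two ways of counting are easy to check with a line of arithmetic each. This is only a sketch of the bookkeeping (the function names are mine): the “marketing” figure counts raw bits, while the “user” figure counts one stored byte per 14-bit word.

```c
#include <stdint.h>

/* Flash size counted as raw bits: each program word is 14 bits wide. */
static uint32_t pic16_flash_bytes(uint32_t words)
{
    return words * 14u / 8u;
}

/* Constant data capacity: one byte fits in each 14-bit word. */
static uint32_t pic16_const_data_bytes(uint32_t words)
{
    return words;
}
```

For 8192 words, the first function gives 14336 bytes (14 KB) and the second gives 8192 bytes, which is the gap to keep in mind when comparing against byte-addressed flash on other parts.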

I have a longer write-up of PIC16 in the main PIC16 article.

Microchip PIC24

While the PIC10, 12, 16, and 18 are all 8-bit cores with 12-16 bit program memory, the PIC24 moves up to 16-bit data operated through 24-bit instructions (are you starting to catch on to the numbering system?).

While all the PICs before it were 4T machines, the PIC24 is 2T — that is, two clock cycles per instruction cycle.

The PIC24 has new indirect addressing modes that allow incrementing/decrementing and register-offset addressing, has a few more other instructions, and has three — instead of two — hardware breakpoints; but otherwise, the core is very much in the spirit of the PIC16.

The PIC24 carries the excellent power consumption figures that the PIC16 has, but many of the parts lack the clocking and oscillator options the MSP430 has (and apples-to-apples, the MSP430 is lower-power).

The dsPIC versions of these parts — which add DSP-friendly instructions — are popular for motor drivers, but it’s not clear that the PIC24 has more widely been the runaway success Microchip had hoped.

Microchip PIC32

While everyone was migrating their 8-bit proprietary cores to Arm, Microchip was gleefully popping out PIC parts. But in 2007, they finally decided to add a new microcontroller — the PIC32 — which uses a third-party, industry-standard 32-bit core. Instead of following everyone to the Arm ecosystem, they took a different turn: PIC32 parts use the MIPS architecture — specifically the M4K core.

MIPS built this core for single-chip MCU applications. M4K has 32 registers, a 5-stage pipeline, vectored interrupts and exceptions, bit-manipulation, and 16-bit instruction encoding support.

It is not the same as an Arm processor, but at the C application level, they are similar enough that any Arm developer should have no problems (other than the usual manufacturer-to-manufacturer peripheral differences).

You can program and debug the PIC32 using the same PGC/PGD/MCLR set-up you use on all other PIC parts — but there’s also support for JTAG, though the most popular JTAG debugger — the Segger J-Link — has limited support for the PIC32MX parts, and no support for the PIC32MM.

Arm Cortex-M0

The Arm Cortex-M0 ((Formerly ARM, but as of August 1, 2017, “Arm” is the capitalization style they now use.)) is a 32-bit RISC architecture that serves as the entry-level Arm architecture available to silicon vendors for microcontroller applications. Arm cores are designed by Arm Holdings and licensed to semiconductor manufacturers for integration into their products.

Arm started out as a personal computer microprocessor when Advanced RISC Machines was formed as a joint venture between Acorn, Apple, and VLSI Technology to manufacture 32-bit processors for the Acorn computer. While Arm cores have grown in popularity as microprocessors for battery-powered systems (they are almost certainly powering your smartphone), Arm moved into the microcontroller sphere as well. The ARM7TDMI-S was probably the first Arm core that was used in microcontrollers, i.e., processors with completely self-contained RAM, flash, and peripherals. The Atmel AT91 and ST STR7 were probably the first microcontroller parts designed with an Arm core.

It’s important to understand the history of Arm because it explains a serious feature of Arm microcontrollers that differs substantially from the 8051 (the other multi-vendor architecture that dominates the field): Unlike the 8051, Arm is just a core, not a complete microcontroller.

The ARM7TDMI-S didn’t come with any GPIO designs, or provisions for UARTs or ADCs or timers — it was designed as a microprocessor. Thus, as vendors started stuffing this core into their extremely high-end MCUs, they had to add in their vendor-specific peripherals to the AHB (AMBA((Advanced Microcontroller Bus Architecture — these multi-level acronyms are getting tedious)) High-performance Bus).

Consequently, Freescale used a lot of HC08 and ColdFire peripherals, while Atmel designed new peripherals from scratch. ST borrowed a bit from the ST7 (the precursor to the STM8) but used new designs for timers and communications peripherals.

Since many microcontroller projects spend 90% or more of the code base manipulating peripherals, this is a serious consideration when switching from one Arm MCU vendor to another: there’s absolutely zero peripheral compatibility between vendors, and even within a single vendor, their Arm parts can have wildly different peripherals.

Unlike other Arm parts, the M0 series only supports a subset of the 16-bit Thumb instruction set, which allows it to be about 1/3 the size of a Cortex-M3 core. Still, there’s a full 32-bit ALU, with a 32-bit hardware multiplier supporting a 32-bit result. Arm provides the option of either a single-cycle multiply, or a 32-cycle multiply instruction, but in my browsing, it seems as though most vendors use the single-cycle multiply option.

In addition to the normal CPU registers, Arm cores have 13 general-purpose working registers, which is roughly the sweet spot. The core has a nested vector interrupt controller, with up to 32 interrupt vectors and 4 interrupt priorities — plenty when compared to the 8-bit competition, but a far cry from the 240 interrupts at 256 interrupt priorities that the larger Arm parts support. The core also has full support for runtime exceptions, which isn’t a feature found on 8-bit architectures.

The M0+ is an improved version of the M0 that supports faster two-cycle branches (due to the pipeline going from three-stage to two-stage), and lower power consumption. There are a slew of silicon options that vendors can choose from: single-cycle GPIO, support for a simple instruction trace buffer called Micro Trace Buffer (MTB), vector table relocation, and a rudimentary memory protection unit (MPU).

One of the biggest problems with ARM microcontrollers is their low code density for anything other than 16- and 32-bit math, even for those that use the 16-bit Thumb instruction set. This means normal microcontroller-type routines (shoving bytes out a communication port, wiggling bits around, performing software ADC conversions, and updating timers) can take a lot of code space on these parts. Exacerbating this problem are the peripherals, which tend to be more complex (I mean “flexible”) than those on 8-bit parts, often necessitating run-time peripheral libraries and tons of register manipulation.

Another problem with ARM processors is the severe 12-cycle interrupt latency. When coupled with the large number of registers that are saved and restored in the prologue and epilogue of the ISR handlers, these cycles start to add up. ISR latency is one area where a 16 MHz 8-bit part can easily beat a 72 MHz 32-bit Arm microcontroller.

8051

The 8051 was originally an Intel microcontroller introduced in 1980 as one of the first widely-deployed 8-bit parts. The 8-bit modified Harvard core has a fully-orthogonal variable-length CISC instruction set, hardware multiplier and hardware divider, bit-addressable RAM and specific bit-manipulation instructions, four switchable banks of eight registers each, two-priority interrupt controller with automatic register bank-switching, 64 KB of both program and extended RAM addressability, with 128 bytes of “scratch pad” RAM accessible with fast instructions.

The 8051 was actually a specific part — not a family — but its name is now synonymous with the core architecture, peripherals, and even package pin-out ((the 8051 is a member of a family officially called the “MCS-51” — along with the 8031, 8032, 8051, and 8052 — plus all the subsequent versions that were introduced later)).

The original had 4K of ROM ((Expensive windowed ceramic packages allowed EPROM programming for developers, but production units were mask-ROM or OTP — or, in the case of the 8031, only external ROM was supported.)), 128 bytes of RAM, four full 8-bit GPIO ports (32 I/O total), a UART, two or three timers, and a two-priority interrupt system.

The 8051 has a fully orthogonal CISC instruction set, which means you can do nearly any operation with immediate, direct, or indirect operands, and you can do these operations in RAM, registers, or the A accumulator.

Many vendors built direct, drop-in compatible clones of the 8051, and by the time Intel discontinued its MCS-51 products in 2007, barely anyone noticed — these third-party parts evolved from mimicking Intel functionality to outright beating them with tons of additional timers, peripherals, and special-purpose functionality.

Because of its small core and fast interrupt architecture, the 8051 architecture is extremely popular for managing peripherals used in real-time high-bandwidth systems, such as USB web cameras and audio DSPs, and is commonly deployed as a house-keeping processor in FPGAs used in audio/video processing and DSP work.

Old-timers associate the 8051 with “old and slow” because the original was a 12T microcontroller — each machine cycle took 12 clock cycles to complete. Since there was no pipelining, every instruction byte took a machine cycle to fetch, plus one or more additional machine cycles to execute — altogether, it could take more than 50 clock cycles to execute a given instruction. Ouch.

No need to worry about that anymore, though: all the modern 8051-style MCUs available are 1T processors, and many of them have a pipelined core, meaning many instructions take the same number of clock cycles to execute as the instruction’s length. Consequently, in many 8051 implementations, operations in A (the accumulator) are the fastest, followed by the register bank, and then RAM.

I ended up with three different 8051-compatible microcontrollers in the lineup: the Nuvoton N76, the Silicon Labs EFM8 Laser Bee, and the STCmicro STC8.

From a pure core design standpoint, the STC8 is probably the most interesting — while I can’t find documentation to confirm this, it appears that STCmicro uses a 24-bit (or more) parallel instruction-fetch size. This means that a huge number — more than 80% — of instructions can be executed in a single cycle, which is a substantial step up from all other 8051s on the market these days.

However, the EFM8, from Silicon Labs’ C8051 lineage, can hit much higher clock speeds — topping out at 72 MHz — making it the fastest part I reviewed, by core speed.

I’ll be looking more at this in the performance section of the review.

STM8

The STM8 core has six CPU registers: a single accumulator, two index registers, a 24-bit program counter, a 16-bit stack pointer, and a condition register. The STM8 has a Harvard architecture, but uses a unified address space. There’s a 32-bit-wide program memory bus which can fetch most instructions in a single cycle — and pipelined fetch/decode/execute operations permit many instructions to execute in a single cycle.

The claim to fame of the core is its comprehensive list of 20 addressing modes, including indexed indirect addressing and stack-pointer-relative modes. There are three “reaches” for addressing — short (one-byte), long (two-byte), and extended (three-byte) — trading off memory area with performance.

This is the only architecture in this round-up that has this level of granularity — all the other chips are either RISC-style processors that have lots of general-purpose registers they do their work in, or 8051-style CISC parts that manipulate RAM directly — but pay a severe penalty when hitting 16-bit address space. The STM8 manages these trade-offs in an efficient manner.

Peripherals

The chart above illustrates the differences in core clock speed among each MCU. As will be seen in the evaluation section, core clock speed is not a good predictor of performance when comparing between different MCU families (especially between 8-, 16-, and 32-bit parts). However, most MCUs limit the maximum peripheral clock rate to that of the CPU, which may be a driving factor if your application requires fast peripheral clocks (say, for fast GPIO bit-banging or for high-speed capture/compare timer operations). The Infineon XMC1100 is a neat exception to this rule — its peripheral clock can run at up to 64 MHz.

There are other important asterisks to this data: the Atmel tinyAVR and megaAVR parts have severely limited operating ranges when running below 5V, which will affect most modern designs. The tinyAVR can only run at 10 MHz below 3.6V, and at 5 MHz below 2.2V. The megaAVR has the same speed grades, but even worse, has nothing faster than an 8 MHz internal oscillator. When talking about sub-$1 MCUs, adding a crystal or even low-cost ceramic resonator adds a sizable portion of the cost of the MCU to the BOM.

The Silicon Labs EFM8 Laser Bee, with its 72 MHz core clock speed, beats out even the ARM microcontrollers in this round-up. The Sanyo LC87 brings in a 12 MHz reading — but bear in mind this is a 3T architecture, which limits the actual instruction clock speed to 4 MHz. The Holtek HT66 and Microchip PIC16 are both 4T architectures, but the PIC16 has a relatively snappy 32 MHz core speed (thanks to its on-board PLL), which allows it to compete better with 8 MHz parts.
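To make the 3T/4T arithmetic concrete, here is a small Python sketch, meant to be run on a PC rather than on any of these parts. It divides each core clock by the number of clocks per machine cycle. The function name and the table layout are illustrative choices of mine; the clock speeds and T-values are the ones quoted above. The HT66 is left out because its clock speed isn't stated in this paragraph, and note that a machine-cycle rate is only a rough proxy for instruction throughput.

```python
def machine_cycle_rate_mhz(core_clock_mhz, clocks_per_machine_cycle):
    """Effective machine-cycle (instruction clock) rate in MHz."""
    return core_clock_mhz / clocks_per_machine_cycle


# (core clock in MHz, clocks per machine cycle), taken from the text above
parts = {
    "Sanyo LC87 (3T)": (12, 3),
    "Microchip PIC16 (4T)": (32, 4),
    "Silicon Labs EFM8 (1T)": (72, 1),
}

for name, (clock_mhz, t_value) in parts.items():
    rate = machine_cycle_rate_mhz(clock_mhz, t_value)
    print(f"{name}: {clock_mhz} MHz core clock, {rate:g} MHz machine-cycle rate")
```

Seen this way, the 32 MHz PIC16 lands at 8 MHz, which is why it competes with 8 MHz parts rather than with 32 MHz ones.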

Here, we consider flash capacity in terms of bytes — but be aware that flash usage varies considerably by core. The PIC16 may have 14 KB of flash, but being a 14-bit-wide core, it will feel more like it has 8 KB of flash when it comes time to actually store and manipulate data, and count instructions. The Holtek HT66 is similar to the PIC16, but with a 16-bit-wide fetch. The same goes for the PIC24, which has a 24-bit-wide flash data path. While other cores may have a wider-than-a-byte fetch size, they still allow instructions and data to be packed into flash efficiently.
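The PIC16 figure can be checked with a couple of lines of Python. This is only a back-of-the-envelope sketch: the helper name is mine, and it assumes the quoted byte count is simply the number of flash words multiplied by the word width, which is how the 14 KB figure works out for a 14-bit core.

```python
def flash_words(flash_bytes, word_bits):
    """Number of whole program words that fit in a flash of the given size."""
    return (flash_bytes * 8) // word_bits


pic16_words = flash_words(14 * 1024, 14)
print(pic16_words)  # 14 KB of 14-bit words is 8192 words, i.e. "8 K"
```

Each of those 8192 words holds one instruction (or, at best, one byte of constant data), which is why the part behaves like an 8 KB device.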

The STC8’s insane equipment list starts to shine — with 64 KB of flash, this part should be able to do essentially anything you throw at its space-efficient 8051 core. On the other side of the spectrum, the ARM processors were especially stingy with flash capacity, which is important to consider for applications that rely on a lot of peripheral runtime libraries: as we’ll see in the performance evaluation, many of these parts had little room left over after the test code was programmed onto them. The Microchip PIC24’s 4 KB flash capacity makes the part essentially unusable for general-purpose applications that would usually target a 16-bit controller, as it can only hold 1408 instructions in flash (due to its 24-bit-wide word fetches).

Infineon’s memory configuration is actually “backwards” — it has 8 KB of flash, and 16 KB of RAM. It doesn’t have a good flash pre-fetch engine, so performance-critical code should be moved to RAM for fast execution. While you’re at it, unless you’ve got some large data capturing/analyzing procedures, you could just move your entire program into RAM, too.

The KE04 (and probably the PIC24) both have too little RAM for most projects that would target these architectures. I’m actually less worried about the Holtek HT66; its efficient peripheral library essentially uses no RAM, and 256 bytes of user data is plenty of space for an ultra-low-power MCU that’s designed for only the most basic duties.

Each MCU’s review page discusses its complement of timers, but I wanted to come up with a score to help quickly compare timer peripherals across MCUs — I call it TimerMark.

Here’s how it works:

Basic Timer Blocks

- 1 point for 8-bit counters

- 2 points for 8-bit auto-reload (period) timers

- 2 points for 16-bit counters

- 4 points for 16-bit auto-reload timers

- 6 points for 24-bit auto-reload timers

- 8 points for 32-bit auto-reload timers

- 2 points for RTC

- 2 points for each 16-bit timer set that can be extended to 32-bit

Capture Channels & PWM

- 1 point for each capture channel (regardless of depth)

- 1 point for each 8-bit PWM channel

- 2 points for each 16-bit PWM channel

- 3 points for each 24-bit PWM channel

- 3 points for each arbitrary-phase PWM channel (i.e., if the channel has separate ON and OFF comparator registers)

- 2 points if the PWM module is “power friendly” (multi-channel outputs with programmable dead-time, blanking, etc)
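The scoring rules above can be sketched as a short Python function. Treat this as one possible reading of the rules rather than the exact spreadsheet behind the charts: the dictionary keys are names I made up, each PWM channel is assumed to be counted under exactly one category, the “power friendly” bonus is assumed to apply once per qualifying PWM module, and the example part is hypothetical.

```python
# Points per basic timer block (key names are illustrative)
TIMER_POINTS = {
    "counter8": 1,        # 8-bit counter
    "reload8": 2,         # 8-bit auto-reload (period) timer
    "counter16": 2,       # 16-bit counter
    "reload16": 4,        # 16-bit auto-reload timer
    "reload24": 6,        # 24-bit auto-reload timer
    "reload32": 8,        # 32-bit auto-reload timer
    "rtc": 2,             # real-time clock
    "extend16_to_32": 2,  # 16-bit timer set that can be extended to 32-bit
}

# Points per capture or PWM channel
CHANNEL_POINTS = {
    "capture": 1,              # any depth
    "pwm8": 1,
    "pwm16": 2,
    "pwm24": 3,
    "pwm_arbitrary_phase": 3,  # separate ON and OFF compare registers
}

POWER_FRIENDLY_BONUS = 2  # assumed: once per power-friendly PWM module


def timermark(timer_blocks, channels, power_friendly_modules=0):
    """Sum the points for a part described as {kind: count} dictionaries."""
    score = sum(TIMER_POINTS[kind] * n for kind, n in timer_blocks.items())
    score += sum(CHANNEL_POINTS[kind] * n for kind, n in channels.items())
    score += POWER_FRIENDLY_BONUS * power_friendly_modules
    return score


# Hypothetical part: two 16-bit auto-reload timers, an RTC, four capture
# channels, four 16-bit PWM channels, and one power-friendly PWM module.
example = timermark(
    {"reload16": 2, "rtc": 1},
    {"capture": 4, "pwm16": 4},
    power_friendly_modules=1,
)
print(example)  # 8 + 2 + 4 + 8 + 2 = 24
```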

The chart above illustrates the total number of communications resources each microcontroller has.

Most vendors use a separate, dedicated module for UART, SPI, and I2C communication. Nuvoton’s M0 went a step further and doubled up everything — two UARTs, two SPI, and two I2C. Except under heavily-loaded buses, or under extremely strange designs, there’s rarely a need for multiple SPI or I2C buses, so this decision struck me as a bit odd.

STC’s decision to incorporate four separate UARTs in addition to an SPI and an I2C module seems to be more useful, since UARTs are almost always dedicated to a particular peripheral. Doubling-up on UARTs was a popular decision overall: the other 8051s — the EFM8 and the N76 — bring a second UART, as do the PIC16 and PIC32MM, along with the LPC811.

I also like the flexibility that Atmel, Cypress, Infineon, and Renesas have — they use arrayed “serial units,” each of which can morph into a UART, SPI, or I2C peripheral. Cypress and Infineon have two; Atmel and Renesas have three (plus a fourth I2C-slave-only interface for Renesas).

The odd ones out were the PIC32MM, the LC87, and the MSP430: none of these MCUs have an I2C peripheral — a curious omission in an era of SMBus-compliant digital sensors everywhere. And unlike the 8051’s quasi-bidirectional GPIO configuration, none of these MCUs are well-suited to bit-banging I2C (though it’s obviously possible by switching data-direction).

All the reviewed parts had an ADC except the PSoC 4000S, LPC811, and the Microchip PIC24 (though these peripherals are available on more expensive members of this family).

Most of the 8- and 16-bit parts had 10-bit ADCs, while the 32-bit parts had 12-bit resolution. Some 8-bit vendors offered 12-bit (Holtek HT66, STCmicro STC8), but the stand-out is the Silicon Labs EFM8, which has a 14-bit ADC — the highest resolution in our round-up — and also has 20 channels, tying the tinyAVR and Sanyo LC87.

The PIC16 brought in the highest channel count — it has 24 total inputs and can sample at 470 ksps.

For speed, the EFM8 brings 900 ksps to the table — beat out only by ST and Infineon whose ADCs can clock at up to 1 Msps.

Other vendors turned in results hovering in the 500-800 ksps range.

The Atmel ADCs were the slowest, with the megaAVR’s sluggish 15 ksps (no “0” missing) far below everyone else. Even their 32-bit ARM only turned in a 350 ksps reading — the slowest ARM part tested.

DAC

In this price range, it is unusual to find parts with DACs, but in this microcontroller review, five parts had this functionality. The EFM8 has a two-channel 12-bit DAC — the highest resolution in my round-up. The SAM D10 has a single-channel 10-bit DAC, while the tinyAVR 1-Series part has three channels with 8-bit resolution. The PIC16 has a basic, 5-bit DAC (oddly, with two separate outputs — even though they’re generated from the same DAC). The Freescale KE04 also had a basic 6-bit DAC that is pumped directly into a comparator (where these low-speed, low-resolution DACs are usually used).

Parametric Reach

One of the major themes of this microcontroller selection guide is to show how easy it can be to get going with different parts, and comfortably jump around among ecosystems — picking the best part for the job.

But for casual hobbyists who may live far away from major distributors, and professionals who have to meet tight timelines, sometimes there’s no time to play around with new architectures.

If you want to commit to a single architecture, it’s important to know which one gives you the most headroom to move up. I created a fictitious “times better” score by comparing the part tested with the best part available in the same ecosystem — this usually means fairly comparable peripheral programming, along with identical development tools. I multiplied the core speed, package size, flash, and RAM capacities together, ratioed the two parts, and then took the fourth root. Essentially, if every parameter is doubled, the bigger part is considered “2.0 x” as powerful.
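In other words, the score is the geometric mean of the four ratios. A minimal Python sketch of that calculation follows; the field names are my own, “package size” is assumed to mean pin count, and both example parts are made up purely to check the doubling case described above.

```python
def reach_score(tested, best):
    """Geometric mean of the best-part / tested-part ratios for four specs."""
    params = ("clock_mhz", "pins", "flash_kb", "ram_kb")
    product = 1.0
    for p in params:
        product *= best[p] / tested[p]
    return product ** 0.25  # fourth root of the combined ratio


# Hypothetical parts: every parameter of the bigger part is exactly doubled.
small = {"clock_mhz": 20, "pins": 32, "flash_kb": 16, "ram_kb": 2}
big = {"clock_mhz": 40, "pins": 64, "flash_kb": 32, "ram_kb": 4}

print(f"{reach_score(small, big):.1f} x")  # 2.0 x
```

Taking the fourth root keeps the score on the same scale as a single parameter, so one enormous jump in flash alone can't dominate the result the way it would in a plain product.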

Surprisingly, the PIC32 came out on top: there are four PIC32 families — the PIC32MX was the first; it’s the mainstream core that runs up to 120 MHz, with up to 512 KB of flash and 128 KB of RAM.

But it’s the newer PIC32MZ that reaches even higher: up to 252 MHz, with 2 MB of flash, and — with the DA version of the part — includes 32 MB of onboard DDR2 memory. With the MZ-DA, you can build complex graphical apps without needing an application processor running Linux (and the PCB / BSP complexity that arrives with that). It’s essentially the PIC32 version of the Arm Cortex-M7.

Next up, the STM32 line. The STM32F0 has a famous big brother — the STM32F4 — that’s one of the most capable Arm Cortex parts ever built. Several versions run up to 180 MHz, with 2 MB of flash and up to 364 KB of RAM (in the case of the STM32F469).

But the brand-new STM32F7 — part of the new Cortex-M7 line of parts — goes even further, with 216 MHz maximum operating frequency, 2 MB of flash, and 512 KB of RAM.

The LPC811 — one of the lower-performing parts in my round-up — has several big sisters, including the LPC546xx series, a giant Cortex-M4 with 220 MHz max frequency, 512 KB of flash, 200 KB of RAM, in up to 208-pin packages.

The tinyAVR in this review has very little headroom — these devices top out at 16 KB of flash, 2 KB of RAM, 20 MHz, and 24-pin QFN packages; however, 32 KB tinyAVR parts are soon to be released.

The megaAVR has a bit of reach in pinning and memory. The ATmega3290 keeps the 20 MHz clock speed but bumps up the pin-count to 100 pins. There are many megaAVR parts with 64 KB of flash and 4 K of RAM, as this part has. Some megaAVR parts have as much as 16 KB of RAM or 256 KB of flash. Oddly, Atmel can’t seem to combine these specs — there is no 100-pin, 256 KB flash, 16 KB RAM megaAVR that is in current production.

The SAM D10 extends up to the SAM D21, which maintains its 48 MHz clock speed, but increases flash up to 256 KB, with up to 32 K of RAM, and sizable 64-pin package options.

There’s a lot of headroom left in the SAM Arm microcontroller ecosystem, but if you leave the D1x/D2x line of parts, you’ll lose familiarity with some of the peripherals (especially the communications interfaces). Having said that, the Arm Cortex-M7-based ATSAMS70 will get you up to 300 MHz of performance, 2 MB of flash, and 384 KB of RAM, in up to 144-pin packages. The older A revision parts are deeply discounted, including this 64-pin 1 MB flash part for $5.14 on DigiKey.

With the PSoC 4000S, you’re at rock-bottom in the PSoC ecosystem, so the only direction is up — the PSoC 5 devices run at 80 MHz, with up to 256 KB of flash and 64 KB of RAM in 100-pin packages. These parts come with all the PSoC goodness that Cypress users love — reconfigurable digital logic, lots of analog features, and excellent capacitive-touch sensing.

The just-around-the-corner PSoC 6 promises to bring even more performance to the ecosystem, with the PSoC 63 running a 150 MHz Arm Cortex-M4F, 1 MB of flash, 288 KB of RAM, integrated BTLE connectivity, and 100+ pin packages.

The Freescale KE04 and KL03 are both entry-level devices in the E and L families within the Kinetis system. The E family has good reach up to the 168 MHz KE1x, with up to 512 KB of flash, 64 KB of RAM, and up to 100-pin packaging. Unfortunately, this is a fairly different process than the KE04 reviewed here — there’s no Processor Expert support, and the communications peripherals are quite different than those in the lower-end part. It retains its 5V operating range, which segments it into the E family.

The KL03 extends up to the KL28 — a 72 MHz Cortex-M0+ with 512 KB of flash, 128 KB of RAM, and up to 121-pin BGA packages available. Unlike the E series, the KL series has much more uniform peripherals across its range of devices.

The Holtek HT66 has no real latitude above the HT66F0185 — but as mentioned on the review page, there are tons of application-specific products Holtek makes that use this core.

The Infineon XMC1000 family extends up to the XMC1400, with 200 KB of flash, 16 K of RAM, a slightly-faster 48 MHz core clock, and a 64-pin package. Moving out of the XMC1000 ecosystem, the XMC4000 keeps the XMC1000 peripherals and swaps out the core for a Cortex-M4F, running up to 144 MHz, with 2 MB of flash, 352 KB of RAM, and up to 196-pin packaging options.

The PIC16 tops out in the 64-pin PIC16F19197 device, with 56 KB of flash (well, 32 K words, because PIC) and 4 K of RAM. I’ll include PIC18 devices, though, as they’re targeted with the same compiler, programmed with the same debugger, and share peripherals and architectural decisions.

PIC18 devices can reach up to the PIC18F97J60 — a 100-pin beast with 128 KB of flash (64 K words), and almost 4K of RAM. While most of these 8-bit parts have similar peripherals across the board, I must note the Ethernet MAC and PHY present in the PIC18F97J60. While many higher-end microcontrollers have an Ethernet MAC, this low-end PIC18 part is one of the only microcontrollers — at any price — to also integrate a PHY ((The only other mainstream MCU that has an integrated Ethernet PHY is the $14 Tiva-C TM4C129x, a giant 128-pin 120 MHz Arm Cortex-M4 from Texas Instruments. There are a few other (albeit odd) choices out there: Freescale’s legacy ColdFire microcontrollers include the MCF5223X, which has an integrated Ethernet PHY. Fabless designer ASIX manufactures the AX11015, a 100 MHz 8051 with an integrated Ethernet PHY)).

The PIC24FJ1024GA610 supports up to 1024 KB of flash, 32 KB of RAM, and a 32 MHz run speed — though there are dsPIC devices, like the dsPIC33EP512GM604, with up to 140 MHz operating frequency, 512 KB of flash, and 48 KB of RAM.

The Nuvoton N76 can look up to the 40 MHz W79E658A, with 128 KB of flash, 100-pin packaging, and 1.25K of RAM.

The Nuvoton M051 has a few Cortex-M4 big sisters: the M505 has 2 MB of embedded SPI flash (sounds slow to me), and 128 KB of RAM, runs at 100 MHz, and comes in a 48-pin package. Nuvoton hasn’t released the M487 yet, but it promises a 192 MHz CPU with 512 KB of flash, 160 KB of RAM, and at least 80-pin package options (though Nuvoton hasn’t unveiled all details yet).

Renesas RL-78 extends up to the R5F101SLAFB, with 128-pin package, 512 KB of flash, 32 KB of RAM, and a slightly-faster 32 MHz clock speed.

Sanyo’s LC87 extends up to 100-pin 256 KB parts with 12 KB of RAM, and an 18 MHz clock with the LC87F7NP6AUE.

The Silicon Labs EFM8LB1 is the top-of-the-line part in the EFM8 family — these top out at 64 KB of flash, 4.25 KB of RAM, and a relatively-small 32-pin package.

Having said that, you can stay in the Silicon Labs 8051 family with the C8051F120 — a 100 MHz 8051 with 128 KB of flash, 8.25 KB of RAM, and a 100-pin package. It’s an older part, but it’s still supported in Simplicity Studio (though not in Simplicity Configurator).

The STC8 I tested is the top-of-the-line part in their catalog.

The MSP430 extends up to the MSP430F6779, with 512 KB of flash, 32 KB of RAM, and 128-pin packaging.

The development ecosystem of a microcontroller has a profound impact on productivity and ease of use of the part, and these IDEs, peripheral libraries, dev boards, and debuggers varied wildly among the microcontrollers reviewed here.

Development Environments

Eclipse

Eclipse is a Java-based IDE originally developed at IBM to develop in Java. But since 2001, it has been an open-source project built by the Eclipse Foundation.

Eclipse CDT provides C/C++ tooling, and has taken off like wildfire in the embedded world — starting in the 32-bit ARM ecosystems, but migrating down to 16- and 8-bit parts as well. In fact, almost all the major microcontrollers here are programmed in an IDE based on Eclipse:

- NXP Kinetis KE04 uses Kinetis Design Studio

- NXP Kinetis KL03 uses MCUXpresso (or Kinetis Design Studio)

- Infineon XMC1100 uses DAVE

- Nuvoton M051 uses CooCox CoIDE

- NXP LPC811 uses MCUXpresso

- Renesas RL-78 uses e2 studio

- Silicon Labs EFM8 uses Simplicity Studio

- ST STM32F0 uses System Workbench for STM32

- Texas Instruments MSP430 uses Code Composer Studio

Other than DAVE, CooCox, and e2 studio (which all only run on Windows), all of these toolchains have cross-platform support for Windows, macOS, and Linux.

Main features of Eclipse CDT include multi-project workspaces, debugging with tons of introspection windows, support for different toolchains, plus a syntax-highlighting text editor with content assist text completion, macro definition browsing and highlighting, code snippets, and tons of refactoring capabilities.

The code editor in Eclipse is definitely a stand-out among IDEs tested — especially if you’re coming from other IDEs. Everything is completely customizable, very snappy, and full of features. The pop-up “Source Hover” is one of my favorite features: hover over a function, and the source of the function (including any docs) will pop-up immediately. If you’re still not sure about something, move your mouse down into the pop-up window and it turns into a scrollable editor window, allowing you to see the entire contents of the function (and copy-and-paste from it). One feature request: I would love to see Ctrl-Click working from within this pop-up, and it would also be amazing to see editing capability, too.

Vendor Customizations

The GNU MCU Eclipse plug-ins have made it trivial to set up an Eclipse-based workflow when working on many ARM processors; under the hood, some of these IDEs are basically just pre-packaged open-source components with a nice splash screen. This is definitely the case for Kinetis Design Studio, MCUXpresso, and System Workbench for STM32 (which didn’t even bother changing the Eclipse logo). There’s nothing wrong with that — it’s far less jarring to move between stock Eclipse IDEs, and if you’re short on hard drive space, you could probably install and configure plug-ins to essentially combine many of these IDEs together.

On the other hand, some vendors had to go through great lengths to get an Eclipse-based environment working with their parts. Silicon Labs had to write a debug interface from scratch that could communicate with their tools (and work with Keil C51 binaries), custom property panes for managing the build system — along with packaging a patched WINE system that can run Keil C51 seamlessly on macOS and Linux (and from my testing, they pulled it off).

In fact, one IDE — CoIDE — is so far removed from Eclipse, I hesitated to even mention it in this list. CooCox essentially stripped Eclipse down to its base, and built up CoIDE saving little more than the Eclipse shell.

What results is an IDE that is extremely easy to use — great for students and hobbyists who may find the Eclipse project properties pane to be… well, a pain. This comes at the expense of flexibility, however — tons of the debugging options and windows are missing, and you can only open one project at a time. Sometimes the IDE does things you may not want. For example, every folder you create in a project is automatically added to the list of project source files as well as include files. I imagine this will quickly cause filename conflicts in large projects (which CoIDE doesn’t seem built for).

Vendors like Freescale decided to stop heavily customizing Eclipse with their own proprietary debugging system (which they did in CodeWarrior), and switch to these open-source plug-ins. I have mixed feelings about this change, as CodeWarrior seemed much faster at starting and stopping debug sessions than the GDB-based system everyone uses these days.

On performance overall, recent versions of Eclipse (Mars or better) seem to be much snappier than the disastrous Juno release, so if it felt slow and bloated last time you tried it, you may want to give it another shot.

Oxygen (4.7.0) was just released in June, so the newest IDEs — System Workbench, MCUXpresso, and Code Composer Studio — are still on Neon (4.6). Other Eclipse-based IDEs are on older versions — with Kinetis Design Studio and DAVE being on the oldest release (Luna SR2 — 4.4.2).

Debugging in Eclipse

Eclipse — across nearly all vendors — provided the best out-of-the-box experience out of all the IDEs I tested. Projects support multiple debug configurations that allow you to use different debuggers and target configurations. Out of the box, you get a source code view with interactive breakpoints, a memory browser, a disassembly view, and a list of core CPU registers.

Debugging in Eclipse is relatively unified across the platforms — the biggest differences are the supported debuggers, and the custom debugging windows — especially the peripheral register view.

In my opinion, the peripheral register viewer is one of the most important debug windows — even more important than the disassembly view. My favorite register viewers are the ones used in TI’s Code Composer Studio and SiLabs’ Simplicity Studio. These have a contiguous list of all registers, organized in a tree view, with detailed descriptions of each register’s value, with combo-box selectors of all the values. Simplicity Studio edges out Code Composer Studio by naming all the constants. This is sometimes unnecessary (like: SYSCLK_DIV_12 (System clock divided by 12) ), but definitely keeps your eyes out of the datasheet for the part.

Kinetis Design Studio uses EmbSysRegView — an open-source Eclipse plugin — which provides similar functionality. This uses SVD (CMSIS System View Description), a standardized XML format that describes a part’s peripheral registers and can also be used to generate header files.

System Workbench for STM32 has a similar view, but doesn’t automatically fetch the register values. This may not be a bad thing, as ARM microcontrollers tend to have a lot more register addresses than 8-bit parts, but it still feels a bit clunkier.

MCUXpresso and Infineon DAVE have a somewhat-strange two-step process — you select the peripheral in one view, and it creates a memory rendering. Registers are broken out logically into one or more bits, but the drop-down lists don’t have named enumerations that describe what each bit-pattern does.

DAVE has exactly the same plugin as MCUXpresso, but it seems buggy — it doesn’t always work. Hopefully this gets fixed in a future version, because it severely limits the usability of the debug system.

CoIDE and e2 studio both have the worst register views — they simply display a list of the peripheral register whole values, without breaking them up logically or annotating them with text.

Other features

Some vendors have gone above and beyond with useful (and not-so-useful) additional views and features. e2 studio, despite the pesky peripheral register viewer, has a useful real-time view of variables that update while debugging. I suspect the IDE is periodically breaking the MCU, reading the contents of RAM, and updating the display — but they may have a mechanism for real-time tracing in the RL-78 core.

This IDE can also show flash and RAM usage — though it’s not plotted as nicely as it is in DAVE, which shows a pie chart of all symbols.

Specific to ARM parts, all Eclipse IDEs I tested support semihosting, which allows you to print characters to a console window during debugging. The characters are printed through the debugging interface, so there’s no need to configure a UART. MCUXpresso and CoIDE were the easiest Eclipse-based IDEs to configure with semihosting.

MCUXpresso has nice project properties panes for selecting a C runtime library (it includes Redlib in addition to Newlib Nano) as well as linker settings.

Atmel Studio

While many vendors have transitioned to Eclipse-based IDEs, Atmel went with a Visual Studio Isolated Shell-based platform starting with AVR Studio 5. I do a ton of .NET and desktop-based C++ development, so I expected to feel right at home in Atmel Studio when I first launched it. Unfortunately, Microsoft calls this product “Visual Studio Isolated Shell” for a reason — it’s simply the shell of Visual Studio, without any of the meat. The excellent IntelliSense engine that Microsoft spent years perfecting has been replaced by a third-party “Visual Assist” plugin that struggles to identify global variables, evaluate pre-processor definitions, or perform refactoring of items defined outside of the current file. The Toolchain editor is a near-clone of the Eclipse CDT one (no reason to reinvent the wheel), but it’s missing checkboxes and inputs for commonly-used compiler and linker options; one stunning omission is link-time optimization, which even when manually-specified as command parameters, doesn’t seem to work — odd, since Atmel is using a recent version of GCC.

My biggest issue with Atmel Studio is how incredibly buggy and unstable it has been every time I’ve used it in the last two years. I’m not referring to a specific installation on a specific computer: rather, every single time I’ve installed the software, I’ve fought with AVR Dragon drivers, a bad DLL file in the installer, programmer firmware issues, or, most recently, the software popping up the “Waiting for an operation to complete” message that prevents me from debugging any Atmel product without restarting my computer. Look, I get it: embedded firmware development is a highly-specialized task, so maintaining software that works reliably for such a small user-base can be challenging. Yet, every other vendor’s tools that I tested worked nearly flawlessly.

MPLAB X

While many vendors were moving from their proprietary Windows-only IDEs to an open-source Eclipse-based workflow, Microchip went a different route when they moved MPLAB 8 to the NetBeans-based MPLAB X in 2012.

On paper, NetBeans is a lot like Eclipse — it’s a Java-based IDE that was originally built to target Java development — but has since expanded to support C/C++ (along with web-centric languages: HTML, PHP, JavaScript, etc). Like Eclipse, NetBeans is open-source, and cross-platform. Unlike Eclipse, NetBeans doesn’t strongly enforce the Workspace paradigm — but it does offer “Project Groups,” which provide similar functionality. Both have good text-completion capabilities and source introspection; both have macro expansion.

I’ve used both for years, so I feel comfortable making this subjective claim: NetBeans feels simpler; Eclipse feels more powerful. A lot of this is a result of UX design choices: Eclipse loads the window with tons of buttons, drop-down menus, and docked panes full of features. The entire IDE is much denser than NetBeans.

Even though NetBeans has a lot of the same features, the UI is sparsely populated with the bare minimum of buttons you need to get your job done. Even the menu bar is light on options. Instead, advanced, rarely-used features are buried away inside sub-sub menus, or, somewhat more commonly, behind keyboard combinations. As an example, I have no idea how to show the excellent Macro Expansion view in NetBeans, other than pressing Ctrl-Alt and clicking on a macro. Just to double-check, I went hunting for it in the menu bar and dug through the context menus, and came up empty.

I think students and hobbyists might be drawn toward the simplicity of NetBeans, but I prefer Eclipse’s density, as it encourages users to go exploring and discover new features.

One big omission with the NetBeans text editor is the pop-up Source Hover code explorer that Eclipse has. Hover over any function in Eclipse, and the entire source code for that function pops up in a window you can scroll through. NetBeans will display code docs for functions, but if you want to look at the content of them, you’ll have to Ctrl-Click your way into the definition.

Microchip Customizations

I have to applaud Microchip for heavily customizing NetBeans into MPLAB X — an IDE that really feels like it was built for embedded development. The Project Properties window is all Microchip — you can select which tool you want to use to program the device (or the integrated simulator), as well as compiler options, include paths, and tool configuration.

Integrating the tool setup into individual project properties is useful for developers who switch between devices (and voltages!) a lot; but it may feel clunky to users who are always on the same device, using the same settings — every time they create a new project, they’ll have to go through the same tool configuration settings (debugger powers target, select correct voltage, blah blah blah).

One goof immediately visible is the redundant “Run Project” and “Make and Program Device” buttons. From what I can tell, these are identical (the manual says you can use either). However, after the bizarre Eclipse integrations I’ve seen, I’m used to UI oddities like this in embedded IDEs.

Microchip integrates a lovely dashboard view (visible in the bottom of the main photo), which indicates the device target, the compiler, and the memory usage.

The MPLAB X Options window allows you to add multiple instances of the Microchip XC compilers. As these compilers are quite expensive and don’t support new devices introduced after their release, it is common for shops to have several versions of XC compilers floating around — old, paid-for versions, and new code-size-limited versions they may grab to evaluate a new part before forking over dough.

Debugging

Debugging across all Microchip devices is much slower than in other IDEs. I’m not sure if this is a limitation of the MCU, the PICkit 3, or the IDE (or all of the above). The default behavior of MPLAB X is to reconnect to the tool whenever a debug session starts, but you can shave a few seconds off the debug load time by instructing the IDE to maintain a constant connection to the tool by ticking the appropriate box in the Options dialogue. This really ought to be the default option, as few developers know of its existence, debugging is slow enough as it is, and there are very few use cases where you’d want the IDE to disconnect from the debugger upon completing a debug session.

While there’s an option to display a Disassembly view while debugging, it doesn’t come up by default, and even when you select it, it doesn’t seem to be “sticky”: you have to re-open the view every time you start a debug session. This is fine for Java or desktop C/C++ development, but for embedded microcontrollers, disassembly view is critical.

Another afterthought seems to be the peripheral register view, and peripheral register handling in general. Pull up the SFRs view, and you’ll be greeted by an extremely slow-loading window that’s painful to scroll through — especially on larger devices like the PIC32.

This view displays the address of the register (who cares?), the hard-to-understand short-form name, the hex value of the register, the decimal value of the register (who cares?), the binary value (hilariously long when working on 16- and 32-bit processors), and an ASCII interpretation of the register’s value (…why on Earth…?) — but what’s not in this view is: a human-readable name of the register, description of the register’s function, or a human-readable decoding of the register’s current value.

There’s a hover-over break-down of the register into its individual bits, but these use the same short-form datasheet names, don’t provide descriptions, and don’t contain enumeration values for multi-bit fields (or any explanation at all).

Basically, you’re going to have to have your datasheet open so that you can hand-decode the values of these registers, as Microchip doesn’t seem interested in integrating that style of documentation into their IDE.

These are the things that drive me nuts: it’s 2017, and all of this data is already computerized. All they need to do is add a bit more functionality to their view (which many Eclipse-based vendor tools have), and all of a sudden, the SFR view becomes ten times more productive to use.
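To make the complaint concrete, here is a minimal sketch of the kind of decoding I’d like an SFR view to do for me. The register layout, field names, and descriptions are all invented for illustration; they don’t correspond to any real Microchip part or to anything MPLAB X exposes.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdio.h>

/* Hypothetical 8-bit timer control register, invented for illustration.
   It does not describe any real Microchip part. */
typedef struct {
    const char *name;          /* short-form datasheet name */
    const char *desc;          /* human-readable description */
    uint8_t shift;             /* bit position of the field's LSB */
    uint8_t width;             /* field width in bits */
    const char *const *values; /* enumeration names, or NULL */
} field_desc_t;

static const char *const prescaler_names[] = {"1:1", "1:4", "1:16", "1:64"};

const field_desc_t tcon_fields[] = {
    {"TON",   "timer enable",    7, 1, NULL},
    {"TCS",   "external clock",  3, 1, NULL},
    {"TCKPS", "prescaler ratio", 0, 2, prescaler_names},
};
const size_t tcon_field_count = sizeof tcon_fields / sizeof tcon_fields[0];

/* Extract one field's raw value from the register. */
unsigned field_value(uint8_t reg, const field_desc_t *f) {
    return ((unsigned)reg >> f->shift) & ((1u << f->width) - 1u);
}

/* Write one "NAME (description) = value" line per field into out.
   Returns the number of characters written, or -1 if out is too small. */
int decode_register(uint8_t reg, char *out, size_t out_size) {
    size_t used = 0;
    for (size_t i = 0; i < tcon_field_count; i++) {
        const field_desc_t *f = &tcon_fields[i];
        unsigned v = field_value(reg, f);
        int n;
        if (f->values != NULL) {
            n = snprintf(out + used, out_size - used, "%s (%s) = %s\n",
                         f->name, f->desc, f->values[v]);
        } else {
            n = snprintf(out + used, out_size - used, "%s (%s) = %u\n",
                         f->name, f->desc, v);
        }
        if (n < 0 || (size_t)n >= out_size - used) {
            return -1; /* output buffer too small */
        }
        used += (size_t)n;
    }
    return (int)used;
}
```

With this made-up layout, decoding the value 0x82 reports TON as 1, TCS as 0, and TCKPS as “1:16”, which is exactly the lookup you would otherwise do by hand against the datasheet.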

For higher-end PIC32 and PIC24 devices, there’s a semihosting-like feature Microchip calls appIO (though it only works with their pricy ICD debuggers). There’s also runtime variable watch and instruction tracing, but that’s only supported by the really pricy REAL ICE debugger.

Holtek HT-IDE3000

Anyone who complains about IDE bloat and download registration walls should immediately check out Holtek HT-IDE3000 (yes, that’s what it’s called). Visit their website, click the download link, and a small 90 MB ZIP file starts downloading.

They do make you type in the serial number on your $40 eLink debugger before using it (likely to deter debugger cloning), but that’s the only registration you’ll see.

Once you get the IDE up and running, the first thing you’ll notice is its Office 2003 look. It ain’t pretty, but it’s functional. All the menu buttons you see are completely customizable through a drag-and-drop editor, which I didn’t see in any other IDE tested.

The best part about having such an old-school IDE is that this thing screams: zero-lag text-editing, immediate hover-over pop-up tooltips with function source code (like the Hover Source feature in Eclipse, but much more lightweight), and zippy project building. I did a double-take when looking at RAM usage: HT-IDE3000 uses 9.9 MB of RAM. Yes, that decimal point is placed properly. Insane.

This comes at the expense of code intelligence features. IDE3000 can go to variable, macro, and function definitions; auto-complete variables and functions; and display pop-up source listings of functions when you hover over them. But there are no real macro expansion capabilities or code outline views, and the text completion is pretty basic: it’s only a selectable list of the symbols visible in the given context, and while it recognizes C types and functions, it doesn’t seem to like remembering SFRs or other things that get #define’d.

This turns out to be good enough for the sorts of projects these small devices tackle. It’s not like you’re going to be juggling around more than a few dozen variables, and I’d bet 90% of the code-bases that target these parts comprise a single C file.

What you do get with Holtek is deep integration with the device you’re targeting. There’s built-in functionality for interacting with EEPROM on the device, programming using USB bootloaders present in some of their chips, as well as a built-in OTP workflow for generating the appropriate files Holtek needs to manufacture your parts (since most Holtek devices sold are OTP parts).

As soon as you build a project, IDE3000 will jump into a debugging session in the background, even as you continue editing and building. This unconventional experience lures you to tap that “Build” button often, and gush at your progress. As most firmware projects targeting this microcontroller will hover in a while() loop until interacted with, you can double-click on a line of code while editing to set a breakpoint, immediately check out what’s going on, make changes, and continue editing, all without the manual process of uploading code, switching to a debug perspective, and waiting for the image to flash, start running, and hit a breakpoint.
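For readers who haven’t written firmware for parts this small, the idle-until-poked structure described above might look roughly like the sketch below. Every name here is hypothetical, and nothing in it is Holtek-specific; on real hardware an interrupt handler or a pin read would set the flag.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical event flag; on real hardware an interrupt handler
   (or a polled input pin) would set it. */
volatile bool button_event = false;

/* Hypothetical output state, standing in for a port register. */
uint8_t led_state = 0;

/* One pass of the main loop. Returns true if an event was handled. */
bool poll_once(void) {
    if (!button_event) {
        return false;          /* nothing to do: keep idling */
    }
    button_event = false;      /* acknowledge the event */
    led_state ^= 1u;           /* a natural line for a breakpoint */
    return true;
}

/* The body of a typical firmware main(): spin forever, reacting to events. */
void firmware_loop(void) {
    while (1) {
        poll_once();
    }
}
```

Because the processor spends nearly all of its time spinning in that loop, a breakpoint dropped on the toggle line only fires once you actually poke the hardware, which is why a background debug session gets in the way so rarely.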

Code building and uploading are so fast, it almost feels like you’re in a simulator or a PC-based environment. The debugging views are basic but fully-functional. The peripheral register view will break down each register into the bits that are set and cleared — but I would have preferred hover-over descriptions of the registers and the bits they command.

While Holtek could modernize the UI, you’ll get no complaints about stability from me: I didn’t have a single crash, bug, hiccup, or driver installation kerfuffle the entire time I used IDE3000. I tried it on both Windows 7 and the Windows 10 Fall Creators Update, and the experience was identical. Holtek keeps up a brisk update schedule, squashing bugs and adding new features several times a year; in fact, they released two updates over the course of my writing this review, which is more than any other IDE.

ST Visual Develop (STVD)

STVD is the official IDE for the STM8. Its UI feels even older than IDE3000, but it’s a bit better when it comes to text-editing capabilities. Its code completion is invoked with the familiar Ctrl-Spacebar shortcut, and instead of just displaying all symbols discovered, it seems to default to symbols that make sense in the present context. It knows enough about C to walk into pointer-referenced structs, but unfortunately, has no pop-up documentation or source-code browsing when you hover over a function.

It also feels slow. Code completion can take a second or longer to pop up in larger projects, rearranging toolbars was sluggish, going to the definition of a symbol took time to load the editor window, and menus were slow to appear.

Debugging was also worse than average. Breakpoints can’t be set while the target is running — you must manually pause it, add your breakpoint, and then continue execution. The peripheral registers view was also underwhelming: while it has descriptions of registers, it only displays the whole value of the register — in hex format — without giving you a per-bit breakdown useful for catching bit math bugs in your code. Yet again, you’ll be resigned to manually cross-checking register values with values from the datasheet — one of my biggest pet peeves.

PSoC Creator

Cypress PSoC Creator is the official — and only — development environment available for Cypress’s line of PSoC devices, and encompasses a project management system, text editor, schematic capture tool, code generator, and debugger.

PSoC Creator’s interface lurks out of the last decade with its Office 2003 costume, and its .NET codebase ensures porting it to Linux or macOS will be an arduous task that Cypress itself has written off as unlikely.

PSoC Creator feels snappier and lighter-weight than Eclipse or NetBeans-based environments while maintaining 90% of the IDE features and code introspection abilities. Loadable workspaces weave together one or more projects — any number of which can be open at the same time — exactly as Eclipse does.

A Code Explorer pane leads you to variable and function definitions with a single click, while the text completion digs deep into functions, macros, and variables to bubble up suggestions with the familiar Ctrl-Spacebar shortcut.

Cypress needs to refashion its hover-over tooltips to match what Eclipse or IDE3000 offers; as it stands, the tooltips print the name of the function and its parameters, with no code docs and no quick source view you can use to peek at a function’s implementation.

There’s one-click documentation access, plus a resource meter that monitors not only flash and SRAM usage but also peripheral usage, which shows you exactly how integrated PSoC Creator is with the underlying PSoC hardware.

The 500 lb gorilla in the PSoC Creator ecosystem is the schematic capture configurator tool, which is omnipresent in the IDE, but I’ll save that discussion for the Code Generator section of this review page.

Flash load times were unimpressive (though not the worst seen in my round-up). A basic, 1.4 KB program took 7.82 seconds to load and run to main(). Filling flash up to 16 KB took 10.84 seconds.
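Those two measurements are enough for a rough back-of-the-envelope split between fixed overhead and actual programming speed. The sketch below assumes load time grows linearly with image size, which is my assumption rather than something I measured; the type and function names are made up for this example.

```c
/* Linear model: load_time = fixed_s + size_kb / kb_per_s.
   The linear relationship is an assumption, fitted through two points. */
typedef struct {
    double fixed_s;   /* time spent regardless of image size, in seconds */
    double kb_per_s;  /* marginal programming throughput, in KB/s */
} load_model_t;

/* Fit the model through two (size in KB, time in seconds) measurements. */
load_model_t fit_load_model(double kb_a, double s_a, double kb_b, double s_b) {
    load_model_t m;
    m.kb_per_s = (kb_b - kb_a) / (s_b - s_a);
    m.fixed_s = s_a - kb_a / m.kb_per_s;
    return m;
}

/* Predict the load time for an image of the given size. */
double predict_load_s(const load_model_t *m, double size_kb) {
    return m->fixed_s + size_kb / m->kb_per_s;
}
```

Feeding in the numbers above, fit_load_model(1.4, 7.82, 16.0, 10.84) works out to roughly 4.8 KB/s of marginal throughput and about 7.5 seconds of fixed overhead. If the linear assumption holds, most of the wait is session setup rather than flash programming, though two data points can’t confirm that.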

PSoC Creator jostled my brain when I strained to reduce power consumption in my DMX project. I couldn’t find a standard list of all peripheral registers which would allow me to cross-reference bits from the datasheet. Instead, you can select from the peripherals you’ve configured in the schematic capture view, which opens a limited view of that specific peripheral (displaying only the registers associated with its current function).

As this is an ARM microcontroller, you may intuit that a cross-platform GCC-based toolchain would be easy to set up — but because of the proprietary configuration bitstream required when flashing these devices, you’ll need to use Cypress’s tools to fit your design to the processor and merge this bitstream with your application binary code.

While Cypress stops short of supporting Linux or macOS outright, PSoC Creator can export projects to common cross-platform Eclipse or Makefile-based environments, as well as to industry stalwarts µVision and IAR.

But if you’re on Windows, I wouldn’t bother: this is as close to an Eclipse/NetBeans workflow as you’ll get with a proprietary IDE, and it’s definitely good enough to compete with either.

Keil uVision